Abandon Your University Rankings: It's a Flawed System

Institutions are dropping out of the U.S. News & World Report, Times Higher Ed and QS rankings. As existing scores may exacerbate inequity across the globe, change has never been so important.

If you’ve never questioned university rankings, then this newsletter may come as a shock. If, on the other hand, you’re already familiar with the drawbacks of such measures, you may still end up with the question many of us have: what can we do instead?

In light of the recently released QS rankings, which resulted in the mass boycott by all South Korean universities, we’re diving into the topic. We’ll break down exactly what the key issues are with global university rankings and how they reinforce academic inequity, as well as the most promising solutions to date.

Our thanks go out to meta-researcher and statistician Adrian Barnett, whom we interviewed to help co-create this edition of The Scoop.

“[Ranking is based on] dodgy data and rubbish evidence, yet it’s extremely influential!” - Adrian Barnett, Professor of Statistics, QUT

What are university rankings?

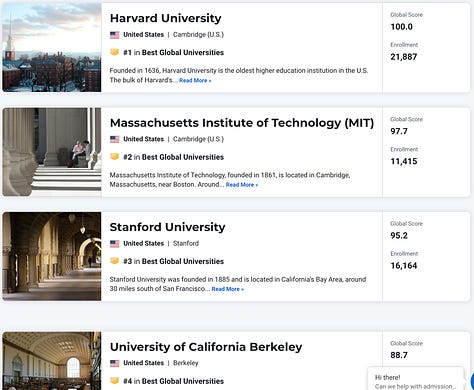

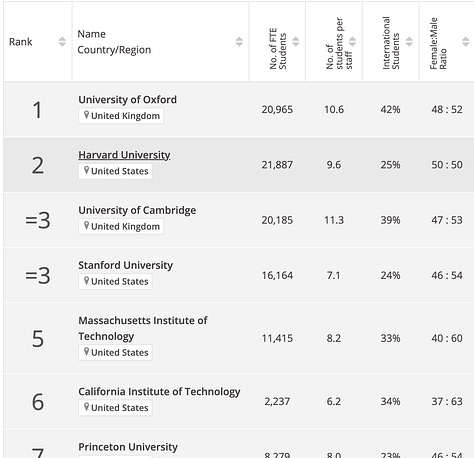

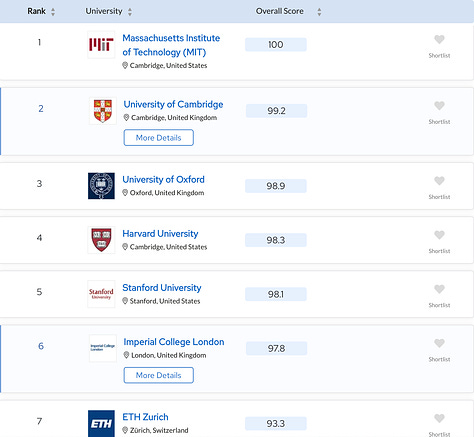

University rankings are an attempt to objectively evaluate the merit and success of universities, comparing them across an entire country, or more commonly, across the globe. Although various such rankings exist, those by Times Higher Education (THE), U.S. News & World Report and QS are among the most widely used.

From a brief glance, it’s clear that the same institutions dominate the top ten spots across all three rankings. Prestigious and world-renowned institutions like MIT, Cambridge, Oxford, Harvard and Stanford consistently top the list every year.

The calculations behind the rankings are generally proprietary, never released to the public or even participating institutions. Nonetheless, we know that a significant portion, generally over 50% of the score, comes from research impact alone. Other factors may include student-to-teacher ratios, retention rates, selectivity, employer reputation and alumni donations. Yet, as we’ll dive into in the next section, the majority of the score has either direct or indirect biases at play, which make the final rankings unfair for a large number of institutions.

What’s wrong with university rankings?

Theoretically, university rankings provide an objective measure of the relative success and importance of universities. However, in practice, university rankings use a limited set of measures that don’t necessarily encompass the various means by which an institution can be deemed impactful and successful.

That’s why many universities — with the South Korean cohort being the most recent — don’t want to participate in the rankings. Last year, a slew of law schools abandoned the US News and World Report (USWR) rankings, including prestigious universities such as Yale, Harvard, Columbia, Stanford, Duke and Georgetown. Some of their undergraduate schools, like Columbia’s, also dropped out. This year, medical schools are following suit, like Duke and Harvard. Harvard’s medical school dean, George Q. Daley, explains the mass exodus simply:

“Rankings cannot meaningfully reflect the high aspirations for educational excellence, graduate preparedness, and compassionate and equitable patient care that we strive to foster in our medical education programs.” - George Q. Daley

So, how is it these allegedly “objective” rankings came to be so unreliable? Various issues and biases exist within the measures. In reality, these issues are interlinked and complex, but a few dominate all others in terms of how strongly they impact the resulting scores:

Biased research-impact measures (i.e. international research is weighted more heavily than local impact)

Lack of data quality (i.e. data is not verified by institutions; those who don’t provide data are penalised)

Lack of transparency and conflicts of interest for ranking organisations (i.e. ranking organisations also providing consulting services; calculations are not made public)

1. Biased measure of research impact

The key issue with calculation of global rankings is the over-emphasis on research and how such research success is evaluated. Take QS, or the Quacquarelli Symonds report. 60% of its score is based on research impact: 40% for academic reputation and 20% for citations per faculty. Times Higher Education (THE) is similar, with 30% for research (based on volume, income, and reputation) and 30% based on citations.
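To make the arithmetic concrete, here is a minimal sketch of how a weighted composite score behaves. Only the 40% and 20% research-related weights come from the QS figures quoted above; the remaining 40% is lumped into a single placeholder component, and both institutions and all of their component scores are invented for illustration. The real calculations are proprietary, so this is not how any ranking organisation actually computes its scores.

```python
# Weights: the two research-related shares are the QS figures quoted in the text.
# "everything_else" is a hypothetical placeholder for the remaining 40%.
QS_STYLE_WEIGHTS = {
    "academic_reputation": 0.40,
    "citations_per_faculty": 0.20,
    "everything_else": 0.40,
}


def composite_score(component_scores, weights=QS_STYLE_WEIGHTS):
    """Return the weighted sum of component scores (each on a 0-100 scale)."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(weights[name] * component_scores[name] for name in weights)


# Two invented institutions with identical scores outside of research.
globally_known = {
    "academic_reputation": 90,
    "citations_per_faculty": 80,
    "everything_else": 70,
}
locally_focused = {
    "academic_reputation": 40,
    "citations_per_faculty": 30,
    "everything_else": 70,
}

gap = composite_score(globally_known) - composite_score(locally_focused)
print(f"Gap in overall score: {gap:.1f} points")
```

In this toy example the two institutions are identical on everything outside research, yet they end up 30 points apart overall (80 versus 50), purely because of the reputation and citation components.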

There’s certainly merit to using research as a key factor in evaluating a university’s impact. But there are obvious and severe biases to the calculation. For starters, rankings reward certain journals over others, and primarily English papers over non-English. For example, Shanghai Ranking ARWU awards 30% based on Nobel Prizes and Fields Medals, and 20% based on publishing in Nature and Science alone.

One of the biggest criticisms here is that impactful research doesn’t necessarily appear in high-ranking, English-focused journals. Local or country-specific impacts, like national clinical guidelines or research that supports local agricultural production, play no role in these measures, even though they also constitute substantial impact.

2. Lack of data quality

If the issues with how the scores are calculated aren’t troubling enough, there are also flaws within the methodology, particularly with data collection and quality assessment.

The organisations conducting these rankings solicit data from participating institutions. If a university doesn’t wish to comply and refuses to provide data, it may still be ranked using aggregated data, and in a fashion that penalises the institution. In particular, the ranking organisation may simply score the institution at “one standard deviation below the mean on numerous factors for which [they] can’t find published data.” That’s according to the president of Sarah Lawrence College at the time, who wrote in 2007 about this issue, positing that complying by providing the requested data is the only way to at least try and be ranked fairly.
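As a rough illustration of what “one standard deviation below the mean” means in practice, the sketch below fills in a missing value for a non-responding institution. The peer values are invented, the function name is hypothetical, and the use of the sample standard deviation is an assumption; the source does not say exactly how the penalty is computed.

```python
from statistics import mean, stdev


def penalised_estimate(published_values):
    """Stand-in value for a non-responding institution:
    the mean of the published values minus one sample standard deviation."""
    return mean(published_values) - stdev(published_values)


# Invented scores on some factor for institutions that did supply data.
peer_values = [60, 70, 80, 90, 100]

estimate = penalised_estimate(peer_values)
print(f"Peer mean: {mean(peer_values):.1f}, imputed value: {estimate:.1f}")
```

Whatever the institution’s true figure is, the value plugged in sits below the peer average by construction, which is why supplying data can look like the only way to be ranked fairly.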

Even if institutions disagree with the validity of rankings, they are unable to avoid the game. This explains why some institutions even go so far as to falsify data they provide to the reporting agencies, like when the dean of Temple University falsified years of data sent to the US News and World Report. His misconduct bumped the university from 53rd to seventh in a matter of three years.

3. Lack of transparency

The argument over fairness of rankings would look quite different if the calculations were openly discussed. The lack of transparency regarding precisely how these scores are computed makes it especially difficult to determine how fairly biases are accounted for. For example, 20% of the US News and World Report rankings use the results of Clarivate’s Academic Reputation Survey. Although the survey adjusts for country disparity and “geographic distribution of researchers”, the exact methods are not reported. Unsurprisingly, the vast majority of respondents report on North American universities, which dominate most of the top global spots.

QS states on their site that “QS prides itself on being transparent.” However, they offer only one article describing their methodology, which lacks any precise calculations. Nor do they release the data used.

Even more serious is the criticism that the reporting organisations purposefully distort university rankings, based on personal interest. Although it’s not often discussed as a source of bias, all the top ranking organisations provide university services such as advertising and consulting. Last year, after examining the QS rankings of 28 Russian universities, Igor Chirikov found that those with QS-related contracts tended to have higher rankings. This, in addition to other suspicions regarding QS conflicts of interest, creates much skepticism regarding the trustworthiness of their rankings.

So, what should be done about university rankings?

As with any multi-faceted, global problem, the solution doesn’t necessarily boil down to a single, easy route. Instead, it requires a collective effort, various initiatives, and even action from audiences you may least expect.

Of course, this isn’t a comprehensive list. Researchers are continually working on novel approaches and methodologies. Conferences like the upcoming AIMOS 2023 Conference provide a centralised location for such innovations to be shared.

Change the rankings

Given the various biases that exist in the current rankings, new approaches focus on updating how the scores are computed.

Academic Influence, for one, acknowledges the existing issues with rankings and attempts to address them by providing entirely new rankings based on academic influence. Moreover, they leave more power in the hands of the user (primarily prospective students), allowing them flexibility in what they prioritise via a custom do-it-yourself ranking tool. Their Custom College Ranking tool makes the entire system more transparent by letting users filter on certain characteristics, such as discipline, graduation rate and retention.

However, solutions in this category may still not be ideal. They suffer from the same lack of transparency as existing systems, and continue to rely heavily on citation count and other measures that are in and of themselves potentially biased or unreliable.

Fix the measures

One important, yet subtle, issue that pervades rankings is the fact that research influence often boils down to citations. With the biased citation game afoot, and corresponding issues in academic integrity such as paper mills and citation hacking, the very measure itself is compromised. Thus, correcting the potential biases in this measure is critical to fixing scores derived from citation count. Here, one of the most compelling solutions is the concept of random audits.

Dr. Adrian Barnett, a meta-researcher at Queensland University of Technology, imagines a system wherein random audits can be used across universities to ensure that the highest standards of publication are being met. Similar to his concept of fraud police, these audits provide a way to hold institutions accountable for the quality of research coming out. For example, a certain number of papers can be randomly selected each year from institutions to undergo a scrupulous quality audit. The auditors would ensure the merit of the research, and catch common issues like manipulated peer reviews or citation hacking.

Of course, such a solution requires enormous resources and commitment, but it may be a promising long-term way of at least ensuring that the research impact measures used in rankings are accurate. Even more importantly, it enables institutions to be accountable to themselves and their own standards, which would be the alleged goal of any ranking in general.
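The random selection step of such an audit could be sketched roughly as follows. This is only an illustration of drawing a fixed number of papers per institution at random; the function name, the paper identifiers and the seed are all hypothetical, and nothing here reflects an existing audit scheme. The audit itself, which is the expensive part, is not modelled.

```python
import random


def select_for_audit(papers_by_institution, papers_per_institution, seed=None):
    """Pick up to `papers_per_institution` papers at random from each institution.

    A seed can be fixed (and published) so the draw is reproducible and
    others can verify that the selection was not hand-picked.
    """
    rng = random.Random(seed)
    selection = {}
    # Sort institutions so the draw does not depend on dictionary ordering.
    for institution, papers in sorted(papers_by_institution.items()):
        sample_size = min(papers_per_institution, len(papers))
        selection[institution] = rng.sample(papers, sample_size)
    return selection


# Invented paper identifiers for two invented institutions.
papers = {
    "University A": ["A-001", "A-002", "A-003", "A-004"],
    "University B": ["B-001", "B-002"],
}

audit = select_for_audit(papers, papers_per_institution=3, seed=2023)
for institution, chosen in audit.items():
    print(institution, chosen)
```

Sampling the same number of papers from each institution keeps the burden comparable across universities. Sampling in proportion to output would be another reasonable design, and the text does not specify which Barnett has in mind.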

Be “more than your rank”

The more we unpack ranking, the more we see its instability in accurately attesting to the relative impact of universities across the globe. This is especially noticeable when we give attention to the more unique, distinguishing features of particular institutions. It becomes clear that no single ranking can capture the diversity of defining measures of success.

That’s why the More Than Our Rank campaign was born: an initiative to showcase universities in ways that rank simply can’t capture. Many institutions have already joined the campaign, such as Queensland University of Technology in Australia, the Izmir Institute of Technology in Turkey, and Unity Health Toronto in Canada. Institutions that join demonstrate a “commitment to responsible assessment and to acknowledging a broader and more diverse definition of institutional success.”

Turn to prospective students

Any progress in establishing new, reliable rankings, or even entirely new systems of defining academic success, may easily go unnoticed so long as the existing rankings are still treated as the gold standard. Here, it’s important to remember who these rankings are designed for. University administrators uphold these rankings, but they are not the audience. Instead, it’s prospective students who give weight and credence to the alleged reputation and scores. By using rankings as part of their decisions when choosing universities, they are both the instigators and unwitting victims of skewed measures of success.

Thus, one of the most critical components in overtaking existing university rankings is communicating to prospective students what these rankings entail, and more importantly, miss out on. By providing useful resources and more detailed, personalised measures of success, prospective students will be better informed and able to make choices based on their unique priorities.

At best, university rankings ignore the diverse measures of success and impact institutions have across the globe — factors which arguably mean more for prospective students than research output alone. At worst, continuing to rely on such rankings may exacerbate the “brain drain” from South to North, leading students to attend high-ranking universities in the U.S. and UK over their local counterparts.

As universities publicly denounce rankings and new measures loom on the horizon, we may be well-positioned to witness a global change in how rankings are perceived. Yet, perhaps it comes down not to academics or anyone within the system, but rather prospective students who are the key clientele for these scores.

This edition of The Scoop has been co-created with meta-researcher and statistician Adrian Barnett. If you're an expert in a topic pertaining to modern academic research, please get in touch! Your voice helps us better communicate these ideas with the broader research community.

Resources

Association for Interdisciplinary Metaresearch & Open Science (AIMOS) Conference, November 21-23 in Brisbane, Australia

Korean universities unite against QS ranking changes, July 2023

The trouble with university rankings (1), June 2023

College Rankings Held Hostage: The Undeserved Monopoly of US News Rankings, May 2023

Offline: The silencing of the South, March 2023

There’s Only One College Rankings List That Matters, March 2023

Rhode Island School of Design Withdraws from U.S. News & World Report’s Annual Rankings, February 2023

20 years on, what have we learned about global rankings?, 2022

Does conflict of interest distort global university rankings?, 2022